Networking concepts

From Gender and Tech Resources

Distant, abstract and idealised, principles have been drafted, ideas floated, and suggestions made about how best to use the enormous, networked tool. But the very idea of "neutrality" where "communications" and "investment" come together (these two not only get together in the FCC, they get together in lots of standardisation committees run by military and corporate interests), where information is key and the battle for access fundamental, suggest the fictional character of the effort.

Dandy it may be to speak about such "access" entitlements to internet power, till one realises the range of forces at work seeking to limit and restrict its operations. They come from governments and their agencies. They come from companies and their subsidiaries. The internet, in other words, is simply another territory of conflict, and one filled with fractious contenders vying for the shortest lived of primacies. Forget neutrality – it was never there to begin with. Just ask the lawyers getting their briefs ready for the next round of dragging litigation. ~ The FCC, the Internet and Net Neutrality [1]

Note: On this page I frequently use the term digital device. A computer is a digital device. So is a router.

Contents

- 1 Network topology

- 2 Hardware connections

- 3 TCP/IP ports and addresses

- 4 Network protocol levels

- 5 Data link layer

- 6 TCP/IP network protocols

- 7 Network devices

- 8 ARP and RARP address translation

- 9 Network address translation (NAT)

- 10 Basic TCP/IP addressing

- 11 Internet Protocol (IP)

- 12 Transmission Control Protocol (TCP)

- 13 User Datagram Protocol (UDP)

- 14 Internet Control Message Protocol (ICMP)

- 15 Simple routing

- 16 Mesh network routing

- 17 Anonymising proxies

- 18 Tunneling

- 19 SSH tunneling

- 20 Virtual Private Network (VPN)

- 21 DNS leaks

- 22 Mix networks

- 23 Tor onion routing

- 24 I2P garlic routing

- 25 Resources

- 26 Related

- 27 References

Network topology

A network consists of multiple digital devices connected using some type of interface, each having one or more interface devices such as a Network Interface Card (NIC) and/or a serial device for PPP networking. Each digital device is supported by network software that provides server and/or client functionality.

Centralised vs Distributed

We can make distinctions in type of networks according to centralisation vs distribution:

- In a server based network, some devices are set up to be primary providers of services. These devices are called servers and the devices that request and use the service are called clients.

- In a peer-to-peer (p2p) network, various devices on the network can act both as clients and servers. Like a network of switchers. :D

Social p2p processes are interactions with a peer-to-peer dynamic. A peer can be either a device or a human. The term comes from the P2P distributed computer application architecture, which partitions tasks or workloads between peers. P2P has inspired new structures and philosophies in many areas of human interaction. Its human dynamic affords a critical look at current authoritarian and centralized social structures. Peer-to-peer is also a political and social program for those who believe that in many cases, peer-to-peer modes are a preferable option.

Flat vs Hierarchical

In general there are two fundamental design relationships that can be identified in the construction of a network infrastructure: flat networks versus hierarchical networks. In a flat network every device is directly reachable by every other device. In a hierarchical network the world is divided into separate locations and devices are assigned to a specific location. The advantage of hierarchical design is that devices interconnecting the parts of the infrastructure need only know how to reach intended destinations without having to keep track of individual devices at each location. Routers make forwarding decisions by looking at that part of the station address that identifies the location of the destination.
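
The forwarding decision described above can be sketched in a few lines of Python. This is only an illustration, assuming IPv4 addresses where the leading bits identify the location; the networks and next-hop names in the table are hypothetical.

```python
import ipaddress

# Hypothetical routing table: destination network -> next hop.
# The router only knows locations (networks), not individual devices.
ROUTES = {
    ipaddress.ip_network("10.1.0.0/16"): "router-amsterdam",
    ipaddress.ip_network("10.2.0.0/16"): "router-berlin",
    ipaddress.ip_network("0.0.0.0/0"): "default-gateway",
}

def next_hop(destination: str) -> str:
    """Pick the most specific network that contains the destination."""
    address = ipaddress.ip_address(destination)
    matches = [net for net in ROUTES if address in net]
    best = max(matches, key=lambda net: net.prefixlen)
    return ROUTES[best]

print(next_hop("10.2.7.42"))   # falls inside 10.2.0.0/16
print(next_hop("192.0.2.1"))   # only the default route matches
```

Three table entries are enough to forward traffic for every device in both locations, which is the advantage of the hierarchical design.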

Physical wiring

The network topology describes the method used to do the physical wiring of the network. The main ones are:

- Bus networks (not to be confused with the system bus of a computer) use a common backbone to connect all devices. Both ends of the network must be terminated with a terminator. A barrel connector can be used to extend it. A device wanting to communicate with another device on the network sends a broadcast message onto the wire that all other devices see, but only the intended recipient actually accepts and processes the message. Bus networks are limited in the number of devices they can serve because of the broadcast traffic they generate.

- Ring networks connect each device to the next in a ring. Every device has exactly two neighbors. A data token is used to grant permission for each computer to communicate. All messages travel through the ring in the same direction, either "clockwise" or "counterclockwise". A failure in any cable or device breaks the loop and can take down the entire network, so some rings double up on networking hardware and let information travel both "clockwise" and "counterclockwise".

- Star networks use a central connection point called a "hub node" (a network hub, switch or router) that controls the network communications. Most home networks are of this type. Star networks are limited by the number of connection points on the hub.

- Tree networks join multiple star topologies together onto a bus. In its simplest form, only hub devices connect directly to the tree bus, and each hub functions as the root of a tree of devices.

- Mesh networks use routes. Unlike the previous topologies, messages sent on a mesh network can take any of several possible paths from source to destination. The most prominent example is the internet.

[Diagram: bus topology, ring topology and star topology]

Hardware connections

Network interface card (NIC)

A Network Interface Card (NIC) is a circuit board or chip which allows a computer to communicate with other computers. When connected to a cable or to another transmission medium such as infrared or the ISM radio bands, it lets computers share resources, information and hardware. Connecting to a network through network cards allows users to share data: a collective can, for example, keep a shared library, exchange e-mail internally, or share hardware devices such as printers.

Each network interface card (NIC) has a built in hardware address programmed by its manufacturer. This is a 48 bit address and should be unique for each card. This address is called a media access control (MAC) address.
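
As a small illustration of the 48 bit address, the sketch below splits a MAC address into its six bytes. It relies on one fact not stated above: the first three bytes are a prefix assigned to the manufacturer, and the last three are chosen by the manufacturer per card. The example address is made up.

```python
def parse_mac(text: str) -> bytes:
    """Convert 'aa:bb:cc:dd:ee:ff' (or the '-' variant) into 6 raw bytes."""
    parts = text.replace("-", ":").split(":")
    if len(parts) != 6:
        raise ValueError("a MAC address has six octets")
    return bytes(int(part, 16) for part in parts)

def describe_mac(text: str) -> dict:
    raw = parse_mac(text)
    return {
        "bits": len(raw) * 8,                      # 6 bytes = 48 bits
        "manufacturer_prefix": raw[:3].hex(":"),   # assigned to the vendor
        "device_part": raw[3:].hex(":"),           # chosen by the vendor
    }

# Made-up address, for illustration only.
print(describe_mac("00:1A:2B:3C:4D:5E"))
```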

Network cabling

You can connect two digital devices (computers) together with a cross-over cable between their network cards, not a straight network jumper cable (otherwise the transmit port would be sending to the transmit port on the other side).

Common network cable types:

- In Twisted Pair cables, wire is twisted to minimize crosstalk interference. It may be shielded or unshielded.

- Unshielded Twisted Pair (UTP)

- Shielded twisted pair (STP)

- Coaxial cables are two conductors separated by insulation. Coax cable types of interest:

- RG-58 A/U - 50 ohm, with a stranded wire core.

- RG-58 C/U - Military version of RG-58 A/U.

- With Fiber-optic cables data is transmitted using light rather than electrons. Usually there are two fibers, one for each direction. Fiber is not subject to electromagnetic interference. Two types of cables are:

- Single mode cables for use with lasers.

- Multimode cables for use with Light Emitting Diode (LED) drivers.

Hubs and switches

A network hub is a hardware device to connect network devices together. The devices will all be on the same network and/or subnet. All network traffic is shared and can be sniffed by any other node connected to the same hub.

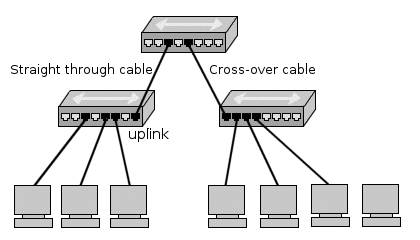

An uplink is a connection from a device or smaller local network to a larger network. Uplink does not have a crossover connection and is designed to fit into a crossover connection on the next hub. This way you can keep linking hubs to put more computers on a network. Because each hub introduces some delay onto the network signals, there is a limit to the number of hubs you can sequentially link. Also the computers that are connected to the two hubs are on the same network and can talk to each other. All network traffic including all broadcasts is passed through the hubs.

A network switch is like a hub but creates a private link between any two connected nodes when a network connection is established. This reduces the amount of network collisions and thus improves speed. Broadcast messages are still sent to all nodes.

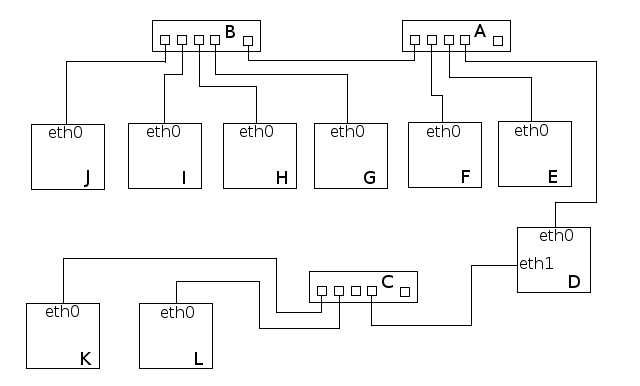
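
The difference between a hub and a switch can be sketched as follows. This is a simplified model, assuming the switch remembers on which port each MAC address was last seen (address learning is not described above, so treat it as an assumption of the sketch). The class and its names are hypothetical.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"

class LearningSwitch:
    def __init__(self, port_count: int):
        self.ports = list(range(port_count))
        self.mac_table = {}  # MAC address -> port it was last seen on

    def receive(self, in_port: int, src: str, dst: str) -> list:
        """Return the list of ports the frame is sent out on."""
        self.mac_table[src] = in_port            # learn where the sender lives
        if dst != BROADCAST and dst in self.mac_table:
            return [self.mac_table[dst]]         # private link: one port only
        # Broadcasts and unknown destinations go out on every other port,
        # which is what a hub does with every frame.
        return [p for p in self.ports if p != in_port]

switch = LearningSwitch(port_count=4)
print(switch.receive(0, "aa:aa:aa:aa:aa:aa", BROADCAST))            # flooded
print(switch.receive(1, "bb:bb:bb:bb:bb:bb", "aa:aa:aa:aa:aa:aa"))  # one port
```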

If you have a machine (device, computer) with two network cards, eth0 connected to an outbound hub and eth1 connected to another hub that only connects to local machines, and it is not configured as a router or bridge, the two networks are considered separated. If no other machine on the network that the eth0 card is connected to is an outbound device, all devices in that network are dependent.

Wireless media

Transmission of waves takes place in the electromagnetic (EM) spectrum. The carrier frequency of the data is expressed in cycles per second, called hertz (Hz). Low frequency signals can travel for long distances through many obstacles but cannot carry a high bandwidth of data. High frequency signals travel shorter distances and pass through fewer obstacles, but can carry a higher bandwidth. Also the effect of noise on the signal is inversely proportional to the power of the radio transmitter, which is normal for all FM transmissions. The three broad categories of wireless media are:

Radio frequency (RF) refers to frequencies of radio waves. RF is the part of the electromagnetic spectrum that ranges from 3 Hz to 300 GHz. A radio wave is radiated by an antenna and produced by alternating currents fed to the antenna. RF is used in many standard as well as proprietary wireless communication systems. RF has long been used for radio and TV broadcasting, wireless local loop, mobile communications, and amateur radio. It is broken into many bands including AM, FM, and VHF bands. In the United States the Federal Communications Commission (FCC) regulates the assignment of these frequencies. Frequencies for unlicensed use are:

- 902 - 928 MHz - Cordless phones, remote controls.

- 2.4 GHz

- 5.72 - 5.85 GHz

Microwave is the upper part of the RF spectrum, i.e. those frequencies above 1 GHz. Because of the larger bandwidth available in the microwave spectrum, microwave is used in many applications such as wireless PAN (Bluetooth), wireless LAN (Wi-Fi), broadband wireless access or wireless MAN (WiMAX), wireless WAN (2G/3G cellular networks), satellite communications and radar. But it became a household name because of its use in microwave ovens.

- Terrestrial - Used to link networks over long distances but the two microwave towers must have a line of sight between them. The signal is normally encrypted for privacy.

- Satellite - A satellite orbits at 22,300 miles above the earth, an altitude at which it stays in a fixed position relative to the rotating earth. This is called a geosynchronous orbit. A station on the ground will send and receive signals from the satellite. The signal can have propagation delays between 0.5 and 5 seconds due to the distances involved.
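
To get a feel for the satellite delay, the distance can be divided by the speed of light. This is a rough sketch that assumes the ground stations sit directly below the satellite and ignores all processing and queueing time, so real delays are longer.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
KM_PER_MILE = 1.609344

def hop_delay_seconds(altitude_miles: float) -> float:
    """Ground -> satellite -> ground delay for a station directly below."""
    altitude_km = altitude_miles * KM_PER_MILE
    return 2 * altitude_km / SPEED_OF_LIGHT_KM_S

one_hop = hop_delay_seconds(22_300)
# A request and its reply each cross the satellite link once.
print(f"one hop: {one_hop:.3f} s, request plus reply: {2 * one_hop:.3f} s")
```

One hop comes out at roughly a quarter of a second, so a request and its reply together take about half a second before any other delay is added.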

Infrared light is the part of the electromagnetic spectrum with wavelengths shorter than radio waves but longer than visible light. Its frequency range is between 300 GHz and 400 THz, which corresponds to wavelengths from 1 mm down to 750 nm. Infrared has long been used in night vision equipment and TV remote controls. Infrared is also one of the physical media in the original wireless LAN standard, IEEE 802.11. Infrared use in communication and networking was defined by the Infrared Data Association (IrDA). Using IrDA specifications, infrared can be used in a wide range of applications, e.g. file transfer, synchronization, dial-up networking, and payment. However, IrDA is limited in range (up to about 1 meter). It also requires the communicating devices to be in line of sight (LOS) and within a 30-degree beam cone. A light emitting diode (LED) or laser is used to transmit the signal. The signal cannot travel through objects. Light may interfere with the signal. Some types of infrared are:

- Point to point - Transmission frequencies are 100 GHz - 1,000 THz. Transmission is between two points and is limited to line of sight range. It is difficult to eavesdrop on the transmission.

- Broadcast - The signal is dispersed so several units may receive the signal. The unit used to disperse the signal may be reflective material or a transmitter that amplifies and retransmits the signal. Installation is easy and the cost is relatively low for wireless.
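
The infrared frequency range and wavelengths quoted above are two views of the same quantity: wavelength equals the speed of light divided by the frequency. A minimal check:

```python
SPEED_OF_LIGHT_M_S = 299_792_458

def wavelength_m(frequency_hz: float) -> float:
    """Wavelength in metres of an electromagnetic wave in vacuum."""
    return SPEED_OF_LIGHT_M_S / frequency_hz

print(wavelength_m(300e9))    # lower edge of infrared, about 1 mm
print(wavelength_m(400e12))   # upper edge of infrared, about 750 nm
```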

LAN radio communications

- Low power, single frequency is susceptible to interference and eavesdropping.

- High power, single frequency requires FCC licensing and high power transmitters. It is susceptible to interference and eavesdropping.

- Spread spectrum uses several frequencies at the same time. Two main types are:

- In Direct sequence modulation the data is broken into parts and transmitted simultaneously on multiple frequencies. Decoy data may be transmitted for better security.

- In Frequency hopping the transmitter and receiver change predetermined frequencies at the same time (in a synchronized manner).
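
Frequency hopping only works if both sides compute the same sequence of channels. The sketch below shows the idea with a shared seed. The channel count and seed values are hypothetical, and Python's `random` module stands in for whatever hop sequence a real radio standard prescribes (it is not suitable where the sequence must stay secret).

```python
import random

CHANNELS = list(range(79))  # hypothetical set of numbered channels

def hop_sequence(shared_seed: int, hops: int) -> list:
    """Derive a repeatable channel sequence from a seed both sides know."""
    rng = random.Random(shared_seed)
    return [rng.choice(CHANNELS) for _ in range(hops)]

transmitter = hop_sequence(shared_seed=1234, hops=8)
receiver = hop_sequence(shared_seed=1234, hops=8)

print(transmitter)
print(transmitter == receiver)  # same seed, so both hop in step
```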

TCP/IP ports and addresses

The part of the network that does the job of transporting and managing the data across the "normal" internet is called TCP/IP, which stands for Transmission Control Protocol (TCP) and Internet Protocol (IP). The IP layer requires a 4-byte (IPv4) or 16-byte (IPv6) address to be assigned to each network interface card on each computer. This can be done automatically using network software such as Dynamic Host Configuration Protocol (DHCP) or by manually entering static addresses.

Port numbers

The TCP layer requires what is called a port number to be assigned to each message. This way it can determine the type of service being provided. These are not ports that are used for serial and parallel devices or for computer hardware control, but reference numbers used to define a service (RFC 6335).

Addresses

Addresses are used to locate computers, almost like a house address. Each IPv4 address is written in what is called dotted decimal notation. This means there are four numbers, each separated by a dot. Each number represents a one byte value with a possible range of 0-255.
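
Dotted decimal notation is just a readable way to write a 4-byte number. The sketch below converts by hand and compares the result with Python's standard `ipaddress` module. The address used is an arbitrary example.

```python
import ipaddress

def dotted_to_int(dotted: str) -> int:
    """Combine four one-byte values into a single 32-bit number."""
    octets = [int(part) for part in dotted.split(".")]
    if len(octets) != 4 or any(not 0 <= o <= 255 for o in octets):
        raise ValueError("expected four numbers in the range 0-255")
    value = 0
    for octet in octets:
        value = (value << 8) | octet   # shift one byte left, append the next
    return value

address = "192.168.1.10"
print(dotted_to_int(address))
print(int(ipaddress.IPv4Address(address)))   # the standard library agrees
```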

Network protocol levels

Protocols are sets of standards that define all operations within a network and how devices outside the network can interact with the network. Protocols define everything: basic networking data structures, higher level application programs, services and utilities.

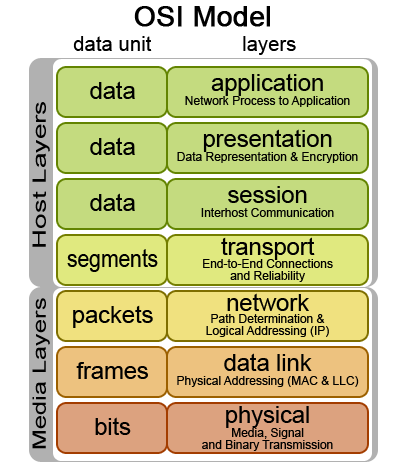

The International Organization for Standardization (ISO) has defined the Open Systems Interconnection (OSI) model for networking protocols, commonly referred to as the ISO/OSI model (ISO standard 7498-1). It is a hierarchical structure of seven layers that defines the requirements for communications between two computers. It was conceived to allow interoperability across the various platforms offered by vendors. The model allows all network elements to operate together, regardless of who built them. By the late 1980s, ISO was recommending the implementation of the OSI model as a networking standard. By that time, TCP/IP had been in use for years. TCP/IP was fundamental to ARPANET and the other networks that evolved into the internet. For a comparison of the ARPANET reference model with the ISO/OSI model, see RFC 871. Only a subset of the whole OSI model is used today.

RFCs

Protocols are outlined in Request for Comments (RFCs). The RFCs central to the TCP/IP protocol:

- RFC 1122 - Defines host requirements of the TCP/IP suite of protocols covering the link, network (IP), and transport (TCP, UDP) layers.

- RFC 1123 - The companion RFC to 1122 covering requirements for internet hosts at the application layer

- RFC 1812 - Defines requirements for internet gateways which are IPv4 routers

ISO/OSI model

7. The Application layer provides a user interface by interacting with the running application. Examples of application layer protocols are Telnet, File Transfer Protocol (FTP), Simple Mail Transfer Protocol (SMTP) and Hypertext Transfer Protocol (HTTP).

6. The Presentation layer transforms data it receives from and passes on to the Application layer and Session layer. MIME encoding, data compression, data encryption and similar manipulations of the presentation are done at this layer. Examples: converting an EBCDIC-coded text file to an ASCII-coded file or from a .wav to .mp3 file, or serializing objects and other data structures into and out of XML. This layer makes the type of data transparent to the layers around it.

5. The Session layer establishes, manages and terminates the connections between local and remote applications. The OSI model made this layer responsible for "graceful close" of sessions (a property of TCP), and session checkpointing and recovery (usually not used in the internet protocol suite). It provides for duplex or half-duplex operation, dialog control (who transmits next) and token management (who is allowed to attempt a critical action next), and it establishes checkpointing, adjournment, termination and restart procedures so that long transactions can continue after a crash.

- Full Duplex allows the simultaneous sending and receiving of packets.

- Half Duplex allows the sending and receiving of packets in one direction at a time only.

4. The Transport layer provides end-to-end delivery of data between two nodes and is responsible for the delivery of a message from one process to another. It packages messages for a transport protocol such as the Transmission Control Protocol (TCP), User Datagram Protocol (UDP) or Stream Control Transmission Protocol (SCTP). Some protocols are stateful and connection oriented, allowing the transport layer to keep track of the packets and retransmit those that fail. The layer divides data into segments before transmitting it. On receipt of these segments, the data is reassembled and forwarded to the next layer. If data is lost in transmission or arrives with errors, this layer recovers by having it retransmitted.

3. The Network layer translates the network address into a physical MAC address. It performs network routing, flow control, segmentation/desegmentation, and error control functions. The best known example of a layer 3 protocol is the Internet Protocol (IP).

2. The Data Link layer is responsible for moving frames from one hop (node) to the next. The main function of this layer is to convert the data packets received from the upper layer(s) into frames, to establish a logical link between the nodes, and to transmit the frames sequentially. The addressing scheme is physical, as MAC addresses are hard-coded into network cards at the time of their manufacture. The best known example is Ethernet (IEEE 802.3). IEEE divided this layer into the two following sublayers:

- Logical Link Control (LLC) maintains the link between two computers by establishing Service Access Points (SAPs) which are a series of interface points. See IEEE 802.2.

- Media Access Control (MAC) is used to coordinate the sending of data between computers. See the IEEE 802.3, 802.4, 802.5 and 802.12 standards.

1. The Physical layer coordinates the functions required to transmit a bit stream over a physical medium. It defines all the electrical and physical specifications for devices. This includes layout of pins, voltages, cable specifications, etc. Hubs, repeaters and network adapters are physical-layer devices. Popular protocols at this layer are Fast Ethernet, ATM, RS232, etc. The major functions and services performed by the physical layer are:

- Establishment and termination of a connection to a device.

- Participation in the process whereby resources are effectively shared among multiple users.

- Modulation, or conversion between the representation of digital data in user equipment and the corresponding signals transmitted over a channel. These are signals operating over the physical cabling or over a radio link.

TCP/IP model

4. The Application layer includes all the higher-level protocols such as TELNET, FTP, DNS, SMTP, SSH, etc. The TCP/IP model has no session or presentation layer. Their functionalities are folded into its application layer, directly on top of the transport layer.

+--------------------+

| Application data | Application packet

+--------------------+

3. The Transport layer provides datagram services to the Application layer. This layer allows host and destination devices to communicate with each other for exchanging messages, irrespective of the underlying network type. Error control, congestion control, flow control, etc., are handled by the transport layer. The protocols used are the Transmission Control Protocol (TCP) and User Datagram Protocol (UDP). TCP gives a reliable, end-to-end, connection-oriented data transfer, while UDP provides unreliable, connectionless data transfers.

The data necessary for these functions is added to the packet. This process of "wrapping" the application data is called data encapsulation.

+-----------------------------------+

| TCP header | Application data | TCP packet

+-----------------------------------+

2. The Internet layer (alias Network layer) routes data to its destination. Data received from the transport layer is made into data packets (IP datagrams) containing the source and destination IP addresses (logical addresses). These packets are sent and delivered independently (unordered). Protocols at this layer are Internet Protocol (IP), Internet Control Message Protocol (ICMP), etc.

+-------------------------------------------------+

| IP header | TCP header | Application data | IP packet

+-------------------------------------------------+

1. The Network Interface layer combines OSI's Physical and Data Link layers.

+---------------------------------------------------------------------+

| Ethernet header | IP header | TCP header | Application data | Ethernet packet

+---------------------------------------------------------------------+
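
The wrapping shown in the diagrams above can be imitated with byte strings. This is a toy sketch: the transport and internet "headers" below carry only ports and addresses, whereas real TCP and IP headers are at least 20 bytes each with many more fields. The function names, addresses and ports are made up. The Ethernet type value 0x0800 is the real code for IPv4.

```python
import ipaddress
import struct

def tcp_wrap(src_port: int, dst_port: int, data: bytes) -> bytes:
    # Toy transport header: just the two 16-bit port numbers.
    return struct.pack("!HH", src_port, dst_port) + data

def ip_wrap(src_ip: str, dst_ip: str, segment: bytes) -> bytes:
    # Toy internet header: just the two 4-byte IPv4 addresses.
    header = (ipaddress.IPv4Address(src_ip).packed
              + ipaddress.IPv4Address(dst_ip).packed)
    return header + segment

def ethernet_wrap(dst_mac: bytes, src_mac: bytes, packet: bytes) -> bytes:
    # Ethernet header: destination, source, and type 0x0800 (IPv4).
    return dst_mac + src_mac + struct.pack("!H", 0x0800) + packet

data = b"hello"
segment = tcp_wrap(49152, 80, data)
packet = ip_wrap("192.0.2.1", "192.0.2.2", segment)
frame = ethernet_wrap(bytes.fromhex("001a2b3c4d5e"),
                      bytes.fromhex("001a2b3c4d5f"), packet)

# Each layer adds its own header in front of what it was handed.
print(len(data), len(segment), len(packet), len(frame))
```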

Data link layer

The IEEE 802 standards define the two lowest levels of the seven layer network model and primarily deal with the control of access to the network media [2]. The network media is the physical means of carrying the data such as network cable. The control of access to the media is called media access control (MAC).

Network access methods

All clients talking at once doesn't work. What ways have been developed so far to avoid this?

- Contention

- Carrier-Sense Multiple Access with Collision Detection (CSMA/CD) used by ethernet

- Carrier-Sense Multiple Access with Collision Avoidance (CSMA/CA)

- Token Passing - A token is passed from one computer to another, which provides transmission permission.

- Demand Priority - Describes a method where intelligent hubs control data transmission. A computer will send a demand signal to the hub indicating that it wants to transmit. The hub will respond with an acknowledgement that will allow the computer to transmit. The hub will allow computers to transmit in turn.

- Polling - A central controller, also called the primary device, will poll computers, called secondary devices, to find out if they have data to transmit. If so, the central controller will allow them to transmit for a limited time, then the next device is polled.

Ethernet uses CSMA/CD, a method that allows network stations to transmit any time they want. Network stations sense the network line and detect if another station has transmitted at the same time they did. If such a collision happened, the stations involved will retransmit at a later, randomly set time in hopes of avoiding another collision.
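
The "later, randomly set time" is commonly chosen by truncated binary exponential backoff: after each collision the range of possible waiting slots doubles, up to a cap. The sketch below assumes the 51.2 microsecond slot time of classic 10 Mbit/s Ethernet. The function name is made up for illustration.

```python
import random

SLOT_TIME_US = 51.2   # slot time of classic 10 Mbit/s Ethernet
MAX_EXPONENT = 10     # the range stops doubling after 10 collisions

def backoff_delay_us(collisions: int, rng: random.Random) -> float:
    """Random wait (in microseconds) after the given number of collisions."""
    exponent = min(collisions, MAX_EXPONENT)
    slots = rng.randrange(2 ** exponent)   # 0 .. 2^exponent - 1
    return slots * SLOT_TIME_US

rng = random.Random()
for collisions in (1, 2, 3, 10):
    print(collisions, backoff_delay_us(collisions, rng))
```

Two stations that collided each draw their own random number, so the chance that they pick the same slot again halves with every further collision.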

Data encapsulation

1. One computer requests to send data to another over a network.

2. The data message flows through the Application layer and uses a TCP or UDP port to pass on to the Transport layer. The unit of data at the Transport layer may have one of two names, segment or datagram. If the TCP protocol is being used, it is called a segment. If the UDP protocol is being used, it is called a datagram.

3. The data segment obtains logical addressing at the Internet layer via the IP protocol, and the data is then encapsulated into a datagram. The requirements for IP to link layer encapsulation for hosts on an Ethernet network are:

- All hosts must be able to send and receive packets defined by RFC 894.

- All hosts should be able to receive a mix of packets defined by RFC 894 and RFC 1042.

- All hosts may be able to send RFC 1042 defined packets.

Hosts that support both must provide a means to configure the type of packet sent and the default must be packets defined by RFC 894.

4. The datagram enters the Network Access layer, where software will interface with the physical network. A data frame encapsulates the datagram for entry onto the physical network. At the end of the process, the frame is converted to a stream of bits that is then transmitted to the receiving computer.

5. The receiving computer removes the frame, and passes the packet onto the Internet layer. The Internet layer will then remove the header information and send the data to the Transport layer. Likewise, the Transport layer removes header information and passes data to the final layer. At this final layer the data is whole again, and can be read by the receiving computer if no errors are present.

Encapsulation formats

Ethernet (RFC 894) message format:

+--------------------------------------------------------------------------------------------------------------------+

| preamble | destination address | source address | type | application, transport and network data | CRC | Ethernet packet

+--------------------------------------------------------------------------------------------------------------------+

- 8 bytes for preamble

- 6 bytes for destination address

- 6 bytes for source address.

- 2 bytes of message type indicating the type of data being sent

- 46 to 1500 bytes of data. The maximum length of an Ethernet frame is 1526 bytes. This means a data field length of up to 1500 bytes.

- 4 bytes for cyclic redundancy check (CRC) information
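
The field sizes listed above add up to the maximum frame length: 8 + 6 + 6 + 2 + 1500 + 4 = 1526 bytes. The sketch below reads the three header fields back out of a frame. It assumes the frame is handed over without preamble and CRC, as these are usually handled by the network hardware. The addresses are made up.

```python
import struct

def parse_ethernet_header(frame: bytes) -> dict:
    """Split a frame (without preamble and CRC) into header fields and data."""
    dst, src, ether_type = struct.unpack("!6s6sH", frame[:14])
    return {
        "destination": dst.hex(":"),
        "source": src.hex(":"),
        "type": f"0x{ether_type:04x}",   # 0x0800 means the data is IPv4
        "data": frame[14:],
    }

# Broadcast destination, made-up source, type IPv4, then the data.
example = bytes.fromhex("ffffffffffff" "001a2b3c4d5e" "0800") + b"payload"
print(parse_ethernet_header(example))
```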

IEEE 802 (RFC 1042) message format:

+------------------------------------------------------------------------------------------------------------------------+

| preamble | SFD | destination address | source address | length | DSAP | SSAP | control | info | FCS | IEEE 802.3 packet

+------------------------------------------------------------------------------------------------------------------------+

IEEE 802.3 Media Access Control section used to coordinate the sending of data between computers:

- 7 bytes for preamble

- 1 byte for the start frame delimiter (SFD)

- 6 bytes for destination address

- 6 bytes for source address

- 2 bytes for length - The number of bytes that follow not including the CRC.

Additionally, IEEE 802.2 Logical Link control establishes service access points (SAPs) between computers:

- 1 byte destination service access point (DSAP)

- 1 byte source service access point (SSAP)

- 1 byte for control

Followed by Sub Network Access Protocol (SNAP):

- 3 bytes for org code.

- 2 bytes for message type which indicates the type of data being sent

- 38 to 1492 bytes of data

- 4 bytes for cyclic redundancy check (CRC) information named frame check sequence (FCS)
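
The 38 to 1492 byte data range follows from the Ethernet range of 46 to 1500 bytes once the extra LLC and SNAP fields listed above are subtracted. A quick check of the arithmetic:

```python
ETHERNET_MIN_DATA, ETHERNET_MAX_DATA = 46, 1500

LLC_BYTES = 1 + 1 + 1    # DSAP + SSAP + control
SNAP_BYTES = 3 + 2       # org code + message type
overhead = LLC_BYTES + SNAP_BYTES

snap_min_data = ETHERNET_MIN_DATA - overhead
snap_max_data = ETHERNET_MAX_DATA - overhead
print(overhead, snap_min_data, snap_max_data)
```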

Trailer encapsulation

This link layer encapsulation is described in RFC 1122 and RFC 893. It is not used very often today and may be interesting for further experimentation.

TCP/IP network protocols

The Transmission Control Protocol/Internet Protocol (TCP/IP) uses a client-server model for communications. The protocol defines the data packets transmitted (packet header, data section), data integrity verification (error detection bytes), connection and acknowledgement protocol, and re-transmission.

TCP/IP Time To Live (TTL) is a counting mechanism that limits how long a packet stays valid on its way to its destination. Each time a TCP/IP packet passes through a router, the router decrements the packet's TTL count. When the count reaches zero the packet is dropped by the router. This ensures that errant routing and aimlessly looping packets will not flood the network.
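
The TTL countdown can be sketched as a loop over the routers on a path. The function name and the hop counts are made up for illustration.

```python
def send(ttl: int, routers_on_path: int) -> str:
    """Follow a packet through the routers between sender and receiver."""
    for router in range(1, routers_on_path + 1):
        ttl -= 1                       # each router decrements the counter
        if ttl <= 0:
            return f"dropped at router {router}"
    return f"delivered with TTL {ttl}"

print(send(ttl=64, routers_on_path=5))   # plenty of TTL left
print(send(ttl=3, routers_on_path=5))    # runs out on the way
```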

TCP/IP includes a wide range of protocols which are used for a variety of purposes on the network. The set of protocols that are a part of TCP/IP is called the TCP/IP protocol stack or the TCP/IP suite of protocols (https://en.wikipedia.org/wiki/Internet_protocol_suite).

| ISO/OSI | TCP/IP | TCP/IP protocol examples |

|---|---|---|

| Application, session, presentation | Application | NFS, NIS, DNS, RPC, LDAP, telnet, ftp, rlogin, rsh, rcp, RIP, RDISC, SNMP, and others |

| Transport | Transport | TCP, UDP, SCTP |

| Network | Internet | IPv4, IPv6, ARP, ICMP |

| Data link | Data link | PPP, IEEE 802.2, HDLC, DSL, Frames, Network Switching, MAC address |

| Physical | Physical network | Ethernet (IEEE 802.3), Token Ring, RS-232, FDDI, and others |

Application protocols

FTP, TFTP, SMTP, Telnet, NFS, ping and rlogin provide direct services to the user. DNS provides translation between names and addresses for locations and network cards. RPC allows remote computers to perform functions on other computers. RARP, BOOTP, DHCP, IGMP, SNMP, RIP, OSPF, BGP, and CIDR enhance network management and increase functionality.

- Hypertext Transfer Protocol (HTTP) is the protocol that facilitates transfer of data. Typically, data is transferred in the form of pages, or HTML markup. HTTP operates on TCP port 80.

- Secure HTTP (HTTPS) uses TCP port 443 to securely transfer HTTP data via TLS (Transport Layer Security), the successor to SSL (Secure Sockets Layer).

- File Transfer Protocol (FTP) operates on TCP ports 20 (data) and 21 (control). It is used in simple file transfers from one node to another without any security (transferred in cleartext). SSH File Transfer Protocol (SFTP) is a separate protocol that transfers files securely over SSH, using whichever port SSH uses (usually 22).

- Trivial FTP (TFTP) is a UDP version of FTP that uses UDP port 69. It is called "trivial" because it is relatively unreliable and inefficient and so is more often used for inter-network communication between routers.

- Telnet (Telecommunications Network) is an old protocol used to remotely connect to a node. All communications with telnet are in cleartext (even passwords for authentication). Telnet operates on TCP port 23. Outside of lab situations it is rarely used today.

- Secure Shell (SSH) is a secure replacement of Telnet. SSH encrypts the terminal session, which greatly improves security. SSH usually operates on TCP port 22.

- Network News Transfer Protocol (NNTP) is a protocol used by client and server software to carry USENET (newsgroup) postings back and forth over a TCP/IP network. NNTP operates on TCP port 119.

- Lightweight Directory Access Protocol (LDAP) is a "Directory Services" protocol allowing a server to act as a central directory for client nodes. LDAP operates on TCP and UDP port 389.

- Network Time Protocol (NTP) allows for synchronizing network time with a server. NTP operates on UDP port 123.

- Post Office Protocol (POP3) is the mailbox protocol allowing users to download mail from a mail server. Once you access it, your client software will download all of your incoming mail and wipe it from the server. POP3 operates on TCP port 110.

- The Internet Message Access Protocol (IMAP) allows for server-based repositories of sent mail and other specialized folders. When using IMAP4 instead of POP3 as your incoming mail protocol, you download very minimal information to your local machine and when you want to access actual incoming mail, you are pulling this directly from the mail server. This allows you to access your mail from virtually anywhere. IMAP4 operates on TCP port 143.

- Simple Mail Transfer Protocol (SMTP) used in conjunction with POP3 or IMAP4 allows for sending/receiving of email. Without it you will only be able to receive mail. SMTP operates on TCP port 25.

- Domain Name System (DNS) resolves easy to read domain names into computer readable IP addresses. It operates on UDP port 53, and also on TCP port 53 for large responses and zone transfers.

- Simple Network Management Protocol (SNMP) manages devices on IP networks, such as modems, switches, routers, or printers. Default works on UDP port 161.
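The port numbers listed above can be collected in a small lookup table. The following is a minimal sketch in Python, not an authoritative registry: the names `SERVICES` and `service_for` are made up for this illustration, and only the default ports mentioned in the list are included.

```python
# Default ports as given in the list above: (transport, port) -> protocol.
# SERVICES and service_for are hypothetical names used for illustration.
SERVICES = {
    ("tcp", 80): "HTTP",
    ("tcp", 443): "HTTPS",
    ("tcp", 20): "FTP (data)",
    ("tcp", 21): "FTP (control)",
    ("udp", 69): "TFTP",
    ("tcp", 23): "Telnet",
    ("tcp", 22): "SSH",
    ("tcp", 119): "NNTP",
    ("tcp", 389): "LDAP",
    ("udp", 389): "LDAP",
    ("udp", 123): "NTP",
    ("tcp", 110): "POP3",
    ("tcp", 143): "IMAP4",
    ("tcp", 25): "SMTP",
    ("udp", 53): "DNS",
    ("udp", 161): "SNMP",
}


def service_for(transport, port):
    """Return the protocol usually found on this transport and port."""
    return SERVICES.get((transport.lower(), port), "unknown")


print(service_for("TCP", 22))
print(service_for("udp", 53))
```

A service can be configured to listen on any port, so a lookup like this only tells you what is conventionally expected there.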

Transport protocols

Controls the management of service between computers. Based on values in TCP and UDP messages a server knows what service is being requested.

- Transmission Control Protocol (TCP) is a reliable, connection-oriented protocol used to control the management of application level services between computers.

- User Datagram Protocol (UDP) is an unreliable, connectionless messaging protocol used to control the management of application level services between computers.

- The Stream Control Transmission Protocol (SCTP) is message-oriented like UDP and ensures reliable, in-sequence transport of messages with congestion control like TCP. In the absence of native SCTP support in operating systems it is possible to tunnel SCTP over UDP, as well as mapping TCP API calls to SCTP calls.

Internet protocols

ARP communicates between layers to allow one layer to get information to support another layer. This includes broadcasting. IP and ICMP manage movement of messages and report errors (including routing).

- Internet Protocol (IP) provides the mechanism to use software to address and manage data packets being sent to computers. Except for ARP and RARP all protocols' data packets will be packaged into an IP data packet.

- Address resolution protocol (ARP) enables packaging of IP data into ethernet packages. It is the system and messaging protocol that is used to find the ethernet (hardware) address from a specific IP number. Without it, the ethernet package can not be generated from the IP package, because the ethernet address can not be determined.

- Internet Control Message Protocol (ICMP) provides management and error reporting to help manage the process of sending data between computers.

Network interface protocols

Allows messages to be packaged and sent between physical locations.

- Serial line IP (SLIP) is a form of data encapsulation for serial lines.

- Point to point protocol (PPP) is a form of serial line data encapsulation that is an improvement over SLIP.

- Ethernet provides transport of information between physical locations on ethernet cable. Data is passed in ethernet packets.

Network devices

Repeater

As signals travel along a network cable (or any other medium of transmission), they degrade and become distorted in a process that is called attenuation. If a cable is long enough, the attenuation will finally make a signal unrecognizable by the receiver. A repeater retimes and regenerates the signals to proper amplitudes and sends them to the other segments, enabling signals to travel longer distances over a network.

To pass data through the repeater in a usable fashion from one segment to the next, the packets and the Logical Link Control (LLC) protocols must be the same on each segment. This means that a repeater will not enable communication, for example, between an 802.3 segment (Ethernet) and an 802.5 segment (Token Ring). Repeaters do not translate anything.

Bridge

Bridges work at the Data Link layer. This means that all information contained in the higher levels of the OSI model is unavailable to them, including IP addresses. Bridges read the outermost section of data on the data packet to tell where a message is going.

Bridges do not distinguish between one protocol and another and simply pass all protocols along the network. Because all protocols pass across the bridges, it is up to the individual computers to determine which protocols they can recognise. As traffic passes through the bridge, information about the computer addresses is stored in the bridge's RAM. The bridge uses this information to build a bridging table based on source (MAC) addresses. To determine the network segment a MAC address belongs to, bridges use one of:

- In Transparent Bridging a table of addresses (bridging table) is built as they receive packets. If the address is not in the bridging table, the packet is forwarded to all segments other than the one it came from. This type of bridge is used on ethernet networks.

- In Source Route Bridging the source computer provides path information inside the packet. This is used on Token Rings.
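Transparent bridging as described above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not a real bridge implementation: the class name `TransparentBridge` and its methods are hypothetical, entries never age out, and the rule that a frame whose destination sits on the arrival segment is not forwarded is standard behaviour that the text above does not spell out.

```python
class TransparentBridge:
    """Toy model of a learning bridge (hypothetical, for illustration)."""

    def __init__(self, ports):
        self.ports = set(ports)
        self.table = {}  # bridging table: MAC address -> port

    def receive(self, in_port, src_mac, dst_mac):
        """Learn the source address and return the ports to forward to."""
        # Learn: the source address is reachable through the arrival port.
        self.table[src_mac] = in_port
        out_port = self.table.get(dst_mac)
        if out_port is None:
            # Unknown destination: forward to all segments except the origin.
            return sorted(self.ports - {in_port})
        if out_port == in_port:
            # Destination is on the same segment: nothing to forward.
            return []
        return [out_port]


bridge = TransparentBridge([1, 2, 3])
print(bridge.receive(1, "aa:aa", "bb:bb"))  # bb:bb unknown, frame is flooded
print(bridge.receive(2, "bb:bb", "aa:aa"))  # aa:aa was learned on port 1
```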

Bridges can be used to:

- Expand the distance of a segment.

- Provide for an increased number of computers on the network.

- Reduce traffic bottlenecks resulting from an excessive number of attached computers.

Router

In an environment consisting of several network segments with different protocols and architectures, a bridge may not be adequate for ensuring fast communication among all of the segments. A complex network needs a device that not only knows the address of each segment, but can also determine the best path for sending data and can filter broadcast traffic to the local segment. Such a device is called a router. Routers work at the Network layer of the OSI model, meaning that they can switch and route packets across multiple networks.

A router is used to route data packets between two networks. It reads the information in each packet to tell where it is going. If it is destined for an immediate network it has access to, it will strip the outer packet, readdress the packet to the proper ethernet address, and transmit it on that network. If it is destined for another network and must be sent to another router, it will re-package the outer packet to be received by the next router and send it to the next router.

Gateway

Gateways make communication possible between different architectures and environments. They repackage and convert data going from one environment to another so that each environment can understand the other environment's data. Most gateways operate at the application layer, but they can also operate at the network or session layer of the OSI model.

A gateway strips information until it gets to the required level, repackages the information to match the requirements of the destination system, and works its way back toward the hardware layer of the OSI model: it decapsulates incoming data through the network's complete protocol stack and encapsulates the outgoing data in the complete protocol stack of the other network to allow transmission. A gateway links two systems that do not use the same:

- Communication protocols

- Data formatting structures

- Languages

- Architecture

ARP and RARP address translation

Address resolution refers to determining the address a device has at one protocol level from the address it has at another, for example finding the Ethernet address that belongs to an IP address.

The Address Resolution Protocol (ARP) provides a completely different function to the network than Reverse Address Resolution Protocol (RARP). ARP is used to resolve the ethernet address of a NIC from an IP address in order to construct an ethernet packet around an IP data packet. This must happen in order to send any data across the network. Reverse Address Resolution protocol (RARP) is used for diskless computers to determine their IP address using the network.

In IPv6, ARP and RARP are replaced by the Neighbor Discovery (ND) protocol, which is a subset of the control protocol Internet Control Message Protocol version 6 (ICMPv6).

ARP

A Media Access Control address (MAC address) is the network card address used for communication with other network devices on the subnet. This information is not routable. The ARP table maps an IP address to the hardware (MAC) address of a device on the local network. The MAC address uniquely identifies each node of a network and is used by the Ethernet protocol.

To determine a recipient's physical address, a device broadcasts an ARP request on the subnet that contains the IP address to be translated. The machine with the relevant IP address responds with its physical address.

To make ARP more efficient, each machine maintains in memory a table of addresses resolved and thus reduces the number of Broadcast emissions.
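The effect of the in-memory table can be sketched as follows. This is a toy model in Python, not the way an operating system implements ARP: `ArpCache` is a hypothetical name, the "broadcast" is just a function call, and real tables also expire entries after a while.

```python
class ArpCache:
    """Toy ARP table: remembers answers so broadcasts are not repeated."""

    def __init__(self, broadcast):
        # broadcast is any callable taking an IP address and returning the
        # MAC address of the machine that answers, or None if nobody does.
        self._broadcast = broadcast
        self._table = {}
        self.broadcasts_sent = 0

    def resolve(self, ip):
        if ip in self._table:
            return self._table[ip]  # answered from memory, no broadcast
        self.broadcasts_sent += 1
        mac = self._broadcast(ip)
        if mac is not None:
            self._table[ip] = mac
        return mac


# Hypothetical subnet with a single machine answering ARP requests.
subnet = {"192.168.1.10": "00:11:22:33:44:55"}
cache = ArpCache(subnet.get)
cache.resolve("192.168.1.10")
cache.resolve("192.168.1.10")
print(cache.broadcasts_sent)  # only the first lookup needed a broadcast
```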

RARP

The RARP mechanism allows a device that knows only its own hardware address to learn its IP address by broadcasting a RARP request. The servers receiving the message look up the hardware address in their tables and respond. Once the IP address is obtained, the machine stores it in memory and no longer uses RARP until it is reset.

Network address translation (NAT)

IP-Masquerading translates internal IP addresses into external IP addresses. This is called Network Address Translation (NAT). From the outside world, all connections will seem to be originating from the one external address.

One to one NAT

1:1 NAT (Network Address Translation) is a mode of NAT that maps one internal address to one external address. For example, if a network has an internal server at 192.168.1.10, 1:1 NAT can map 192.168.1.10 to 1.2.3.4 where 1.2.3.4 is an additional external IP address provided by an internet service provider (ISP).

One to many NAT

The majority of NATs map multiple private hosts to one publicly exposed IP address. In a typical configuration, a local network uses one of the designated "private" IP address subnets (RFC 1918). A router on that network has a private address in that address space. The router is also connected to the internet with a "public" IP address assigned by an internet service provider (ISP). As traffic passes from the local network to the internet, the source address in each packet is translated on the fly from a private address to the public address. The router tracks basic data about each active connection (particularly the destination address and port). When a reply returns to the router, it uses the connection tracking data it stored during the outbound phase to determine the private address on the internal network to which to forward the reply.
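The connection tracking described above can be sketched in Python. This is a minimal model under assumptions that go beyond the text: the class `OneToManyNat` and its method names are hypothetical, the router also rewrites the source port (as most one to many NATs do so that replies can be told apart), and entries never expire. The address 203.0.113.5 is a documentation address standing in for a remote server.

```python
import itertools


class OneToManyNat:
    """Toy one to many NAT with a connection tracking table."""

    def __init__(self, public_ip, first_port=40000):
        self.public_ip = public_ip
        self._ports = itertools.count(first_port)
        self._out = {}   # (private ip, private port, dst ip, dst port) -> public port
        self._back = {}  # public port -> the same 4-tuple

    def outbound(self, src_ip, src_port, dst_ip, dst_port):
        """Translate a packet leaving the local network."""
        key = (src_ip, src_port, dst_ip, dst_port)
        if key not in self._out:
            port = next(self._ports)
            self._out[key] = port
            self._back[port] = key
        return (self.public_ip, self._out[key], dst_ip, dst_port)

    def inbound(self, src_ip, src_port, public_port):
        """Find the private host a reply belongs to, or None if untracked."""
        entry = self._back.get(public_port)
        if entry is None or entry[2:] != (src_ip, src_port):
            return None
        return entry[0], entry[1]


nat = OneToManyNat("1.2.3.4")
print(nat.outbound("192.168.1.10", 5555, "203.0.113.5", 80))
print(nat.inbound("203.0.113.5", 80, 40000))
```

A reply that does not match a tracked connection returns `None` here, which corresponds to the router having nowhere to forward it.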

Static NAT

Most NAT devices allow for configuring static translation table entries for connections from the external network to the internal masqueraded network. This feature is often referred to as static NAT. It may be implemented in two ways: port forwarding, which forwards traffic arriving on a specific external port to an internal host on a specified port, and designation of a DMZ host, which forwards all traffic received on the external interface on any port number to one internal IP address, preserving the destination port. Both types may be available in the same NAT device.

Basic TCP/IP addressing

An IP Address is a logical numeric address that is assigned to every single computer, printer, switch, router or any other device that is part of a TCP/IP-based network.

Until the introduction of Classless Inter-Domain Routing (CIDR) in 1993 to slow the growth of routing tables on routers across the internet, and to help slow the rapid exhaustion of IPv4 addresses, classful networks were used. You can still find them in tutorials, some networks, and in archeological artifacts such as the default subnet mask. In classful addresses, the first one or two bytes (depending on the class of network) generally indicate the number of the network, the third byte indicates the number of the subnet, and the fourth byte indicates the host number.

Most servers and personal computers use Internet Protocol version 4 (IPv4). This uses 32 bits to assign a network address as defined by the four octets of an IP address, up to 255.255.255.255. Each octet is converted to a decimal number (base 10) from 0–255 and separated by a period (a dot). This format is called dotted decimal notation. If you are not familiar with number conversions, a decent tutorial can be found at http://www.cstutoringcenter.com/tutorials/general/convert.php

For example the IPv4 address:

11000000101010000000001100011000

is segmented into 8-bit blocks:

11000000 | 10101000 | 00000011 | 00011000

Each block is converted to decimal:

2^7 + 2^6 | 2^7 + 2^5 + 2^3 | 2^1 + 2^0 | 2^4 + 2^3

128 + 64 = 192 | 128 + 32 + 8 = 168 | 2 + 1 = 3 | 16 + 8 = 24

The adjacent octets 192, 168, 3 and 24 are separated by a period:

192.168.3.24
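The same conversion can be done in a few lines of Python. This is a small sketch; the function name `bits_to_dotted` is made up for the example.

```python
def bits_to_dotted(bits):
    """Convert a 32-character string of 0s and 1s to dotted decimal."""
    if len(bits) != 32 or set(bits) - {"0", "1"}:
        raise ValueError("expected exactly 32 binary digits")
    # Segment into 8-bit blocks, then convert each block to decimal.
    octets = [bits[i:i + 8] for i in range(0, 32, 8)]
    return ".".join(str(int(octet, 2)) for octet in octets)


print(bits_to_dotted("11000000101010000000001100011000"))  # 192.168.3.24
```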

Internet Protocol version 6 (IPv6) was designed to answer the future exhaustion of the IPv4 address pool. IPv4 address space is 32 bits which translates to just above 4 billion addresses. IPv6 address space is 128 bits translating to billions and billions of potential addresses. The protocol has also been upgraded to include new quality of service features and security, but also has its vulnerabilities [3] [4]. IPv6 addresses are represented as eight groups of four hexadecimal digits with the groups being separated by colons, for example 2805:F298:0004:0148:0000:0000:0740:F5E9, but methods to abbreviate this full notation exist http://www.vorteg.info/ipv6-abbreviation-rules/.
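Python's standard `ipaddress` module applies the usual abbreviation rules, which makes it a convenient way to check a shortened address by hand. A small sketch using the example address above:

```python
import ipaddress

addr = ipaddress.IPv6Address("2805:F298:0004:0148:0000:0000:0740:F5E9")

# Leading zeros in each group are dropped and the longest run of
# all-zero groups is replaced by "::".
short = addr.compressed
# The full eight-group notation, in lower case.
full = addr.exploded

print(short)  # 2805:f298:4:148::740:f5e9
print(full)   # 2805:f298:0004:0148:0000:0000:0740:f5e9

# Size of the two address spaces.
print(2 ** 32)   # 4294967296, "just above 4 billion"
print(2 ** 128)
```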

Routing tables

To minimise unnecessary traffic load and provide efficient movement of frames from one location to another, interconnected hosts are grouped into separate networks. As a result of this grouping (determined by network design and administration functions) it is possible for a router to determine the best path between two networks. A router forms the boundary between one network and another network. When a frame crosses a router it is in a different network. A frame that travels from source to destination without crossing a router has remained in the same network. A network is a group of communicating machines bounded by routers.

The router will use some of the bits in the IP address to identify the network location to which the frame is destined. The remaining bits in the address will uniquely identify the host on that network that will ultimately receive the frame. There are bits to identify the network and to identify the host. The sender of a frame must make this differentiation because it must decide whether it is on the same network as the destination or on a different network:

- If the sender is on the same network as the destination, it will determine the data link address of the destination machine. Then it will send the frame directly to the destination machine.

- If the destination is on a different network, then the originator must send the frame to a router and let the next router in line forward the frame on to the ultimate destination network. At the ultimate destination network the last router must determine the data link address of the host and forward the frame directly to that host on that ultimate destination network.

When a router receives an incoming data frame, it masks the destination address to create a lookup key that is compared to the entries in its routing table. The routing table indicates how the frame should be processed. The frame might be delivered directly on a particular port on the router. The frame might have to be sent on to the next router in line for ultimate delivery to some remote network.
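The lookup can be sketched in Python as follows. This is an illustration under assumptions, not a description of any particular router: the three routes in `ROUTES` and the name `lookup` are invented for the example, and when several entries match, the sketch prefers the one with the longest mask, which is the usual convention but is not stated in the text above.

```python
import ipaddress

# Hypothetical routing table: (network, what to do with matching frames).
ROUTES = [
    (ipaddress.ip_network("164.25.0.0/16"), "deliver directly on port 1"),
    (ipaddress.ip_network("164.7.0.0/16"), "send to next router 164.25.0.1"),
    (ipaddress.ip_network("0.0.0.0/0"), "send to default router"),
]


def lookup(destination):
    """Mask the destination with each entry's mask and compare the key."""
    addr = int(ipaddress.ip_address(destination))
    best = None
    for network, action in ROUTES:
        key = addr & int(network.netmask)  # the lookup key
        if key == int(network.network_address):
            # Assumption: the most specific (longest) matching mask wins.
            if best is None or network.prefixlen > best[0].prefixlen:
                best = (network, action)
    return best[1] if best else None


print(lookup("164.7.9.2"))
print(lookup("198.51.100.20"))
```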

The routing table is created by direct configuration by an administrator, dynamically through the periodic broadcasting of router update frames, or by a combination of both. Protocol frames from Routing Information Protocol (RIP), Open Shortest Path First (OSPF) and Interior Gateway Routing Protocol (IGRP) are sent from all routers at periodic intervals. As a result, all routers become aware of how to reach all other networks.

The specific behavior that is expected from an IP router is discussed in RFC 1812, a TL;DR (lengthy, like this page) document providing a complete discussion of routing in the IPv4 network environment.

Subnet masking

The IP Address Mask is a configuration parameter used by a TCP/IP end-node and IP router to differentiate between that part of the IP address that represents the network and the part that represents the host.

A router uses the mask value to create a key value that is looked up in the router table to determine where to forward a frame. An end-node uses the mask value to create the same key value but the value is used to compare the destination address with the end-node address to determine whether the destination is directly reachable (on the same network) or remote (in which case the frame must be sent to a router and can not be sent directly to the destination).

The mask value can be assigned by default or it can be specified by the installer of the end-node or router software. The destination IP address and the mask value are combined with a Boolean AND operation to produce the resultant key value. Just in case, for a start in boolean algebra, see http://www.i-programmer.info/babbages-bag/235-logic-logic-everything-is-logic.html

For example:

An end-node is assigned the IP address 164.25.74.131 and a mask value of 255.255.0.0. This end-node wants to send a frame to 164.7.9.2.

- If 164.7.9.2 is on the same network as 164.25.74.131 then the end-node will broadcast an ARP (Address Resolution Protocol) frame to determine the data link address of the destination and it will then send the frame directly to the destination.

- If 164.7.9.2 is on a different network then the workstation must send the frame to a router for forwarding to the ultimate destination network.

All the dotted-decimal notation must be converted to the underlying 32-bit binary numbers to understand what is taking place:

End node 164.25.74.131 = 10100100 00011001 01001010 10000011

Mask 255.255.0.0 = 11111111 11111111 00000000 00000000

Destination 164.7.9.2 = 10100100 00000111 00001001 00000010

When the IP address of the end node is AND'ed with the mask we get:

End node 164.25.74.131 = 10100100 00011001 01001010 10000011

Mask 255.255.0.0 = 11111111 11111111 00000000 00000000

-------------------------------------------------------------------------------------

Boolean AND = 10100100 00011001 00000000 00000000

In dotted-decimal = 164. 25. 0. 0

When the destination IP address is AND'ed with the mask we get:

Destination 164.7.9.2 = 10100100 00000111 00001001 00000010

Mask 255.255.0.0 = 11111111 11111111 00000000 00000000

-------------------------------------------------------------------------------------

Boolean AND = 10100100 00000111 00000000 00000000

(In dotted-decimal) = 164. 7. 0. 0

Since the results (164.25.0.0 and 164.7.0.0) are not equal, the end node concludes that the destination must be on a different network and the frame is sent to a router. A router masks the destination address in an incoming frame and the result is used as a lookup key in the routing table.
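The worked example can be checked with a short Python sketch. The function names `network_key` and `same_network` are made up for this illustration; the addresses and the mask are the ones used above.

```python
import ipaddress


def network_key(address, mask):
    """AND an IPv4 address with a mask and return the result as a string."""
    key = int(ipaddress.IPv4Address(address)) & int(ipaddress.IPv4Address(mask))
    return str(ipaddress.IPv4Address(key))


def same_network(source, destination, mask):
    """True if both addresses give the same key under the mask."""
    return network_key(source, mask) == network_key(destination, mask)


print(network_key("164.25.74.131", "255.255.0.0"))  # 164.25.0.0
print(network_key("164.7.9.2", "255.255.0.0"))      # 164.7.0.0
# The keys differ, so the frame has to go to a router.
print(same_network("164.25.74.131", "164.7.9.2", "255.255.0.0"))  # False
```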

Address classes

The origins of the current implementation of the Internet Protocol (IPv4) and its associated classes of IP addressing can be found in RFC 791: IP addresses were to be of fixed, 32-bit (4 octets) length comprised of a Network Number and a Local Address or Host Number. The resulting range of addresses were then divided into three broad groupings or “Classes”, based on the bit values within the first octet:

- Class A: high order bit is “0”, the remaining 7 bits are the network, and the last 24 bits are the host.

- Class B: high order two bits are “10”, the remaining 14 bits are the network, and the last 16 bits are the host.

- Class C: high order three bits are “110”, the remaining 21 bits are the network, and the last 8 bits are the host.

Two additional classes of IPv4 addressing, Class D and E, were specified in subsequent RFCs:

- Class D: high order four bits are “1110”, the remaining 28 bits identify the multicast group.

- Class E: high order four bits are “1111”, the remaining bits are reserved for experimental use.

Implied within RFC 791, was the concept of Masking, to be used by routers and hosts:

- Class A mask = 255.0.0.0

- Class B mask = 255.255.0.0

- Class C mask = 255.255.255.0

These masks were applied by default based on the value of the leading bits in the IP address. If an address started with a binary 0, then stations assumed Class A masking. The starting bits 10 indicated Class B, and 110 indicated Class C. Consequently, the address class, and with it the default mask, could be determined by looking at the first octet in the address:

- Class A starts with 0 and ends with 0111 1111 (the smallest value in the first octet is decimal 0 and the largest value is 127 yielding a potential range of 0-127).

- Class B starts with 10 and ends with 1011 1111 (the smallest value in the first octet is decimal 128 and the largest value is 191 yielding a potential range of 128-191).

- Class C starts with 110 and ends with 1101 1111 (the smallest value in the first octet is decimal 192 and the largest value is 223 yielding a potential range of 192-223).

- Class D starts with 1110 and ends with 1110 1111 (the smallest value in the first octet is decimal 224 and the largest value is 239 yielding a potential range of 224-239).

- Class E starts with 1111 and ends with 1111 1111 (the smallest value in the first octet is decimal 240 and the largest value is 255 yielding a potential range of 240-255).
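The ranges above translate directly into a small Python function. This is a sketch for illustration; `address_class` and `DEFAULT_MASKS` are names invented here.

```python
# Default masks for the classes that have one (see the list of masks above).
DEFAULT_MASKS = {"A": "255.0.0.0", "B": "255.255.0.0", "C": "255.255.255.0"}


def address_class(address):
    """Return the classful address class of a dotted decimal IPv4 address."""
    first_octet = int(address.split(".")[0])
    if not 0 <= first_octet <= 255:
        raise ValueError("first octet out of range")
    if first_octet <= 127:
        return "A"  # leading bit 0
    if first_octet <= 191:
        return "B"  # leading bits 10
    if first_octet <= 223:
        return "C"  # leading bits 110
    if first_octet <= 239:
        return "D"  # leading bits 1110
    return "E"      # leading bits 1111


for example in ("10.0.0.1", "164.25.74.131", "192.168.3.24", "224.0.0.1"):
    cls = address_class(example)
    print(example, cls, DEFAULT_MASKS.get(cls, "no default mask"))
```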

This division of addressing allows for the following potential number of addresses.

For example: Class A has 8 bits in the Network part and 24 bits in the Host part. Because the high order bit is fixed at 0, 7 network bits remain, meaning 2^7 = 128 possible values in the Network part and 2^24 = 16,777,216 possible values in the Host part.

Creating subnets

To subnet a network is to create logical divisions of the network, for example arranged by floor, building or geographical location. Each device on each subnet is to have an address that logically associates it with the others on the same subnet. This also prevents devices on one subnet from getting confused with hosts on another subnet. Subnetting applies to IP addresses because it is done by borrowing bits from the host portion of the IP address. In a sense, the IP address has three components: the network part, the subnet part and the host part. We create subnets by borrowing the leading bits of the host component of the address; the number of bits borrowed determines the number of subnets available.

To make learning subnetting easier see http://www.subnetting.net/Tutorial.aspx (builds up from no knowledge) and http://www.9tut.com/subnetting-tutorial (starts from knowledge about addresses).

Also, http://www.subnet-calculator.com/ and http://www.subnetmask.info/ and these mental shortcuts:

| Mask | # of subnets | SlashFmt | Class A hosts | Class A mask | Class B hosts | Class B mask | Class C hosts | Class C mask | Class C sub hosts | Class C sub mask |

|---|---|---|---|---|---|---|---|---|---|---|

| 255 | 1 or 256 | /32 | 16,777,214 | 255.0.0.0 | 65,534 | 255.255.0.0 | 254 | 255.255.255.0 | Invalid, 1 address | 255.255.255.255 |

| 254 | 128 | /31 | 33,554,430 | 254.0.0.0 | 131,070 | 255.254.0.0 | 510 | 255.255.254.0 | Invalid, 2 addresses | 255.255.255.254 |

| 252 | 64 | /30 | 67,108,862 | 252.0.0.0 | 262,142 | 255.252.0.0 | 1,022 | 255.255.252.0 | 2 hosts, 4 addresses | 255.255.255.252 |

| 248 | 32 | /29 | 134,217,726 | 248.0.0.0 | 524,286 | 255.248.0.0 | 2,046 | 255.255.248.0 | 6 hosts, 8 addresses | 255.255.255.248 |

| 240 | 16 | /28 | 268,435,454 | 240.0.0.0 | 1,048,574 | 255.240.0.0 | 4,094 | 255.255.240.0 | 14 hosts, 16 addresses | 255.255.255.240 |

| 224 | 8 | /27 | 536,870,910 | 224.0.0.0 | 2,097,150 | 255.224.0.0 | 8,190 | 255.255.224.0 | 30 hosts, 32 addresses | 255.255.255.224 |

| 192 | 4 | /26 | 1,073,741,822 | 192.0.0.0 | 4,194,302 | 255.192.0.0 | 16,382 | 255.255.192.0 | 62 hosts, 64 addresses | 255.255.255.192 |

| 128 | 2 | /25 | 2,147,483,646 | 128.0.0.0 | 8,388,606 | 255.128.0.0 | 32,766 | 255.255.128.0 | 126 hosts, 128 addresses | 255.255.255.128 |
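The host counts in the table follow from the prefix length: a /n network has 2^(32-n) addresses, and two of them (the network address and the broadcast address) are not available for hosts. A small Python sketch to reproduce individual rows; the function name `subnet_size` and the base address 192.168.3.0 are arbitrary choices for this example.

```python
import ipaddress


def subnet_size(prefix):
    """Return (mask, number of addresses, usable hosts) for a prefix length."""
    # strict=False lets the same base address be used for any prefix length.
    network = ipaddress.ip_network(f"192.168.3.0/{prefix}", strict=False)
    addresses = network.num_addresses
    hosts = max(addresses - 2, 0)  # minus network and broadcast address
    return str(network.netmask), addresses, hosts


for prefix in (30, 29, 28, 27, 26, 25, 24, 23):
    print(prefix, subnet_size(prefix))
```

For /31 and /32 the sketch reports zero usable hosts, matching the entries marked invalid in the table.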

Internet Protocol (IP)

Internet Protocol (IP) provides support at the network layer of the OSI model and is defined by RFC 791. All transport protocol data packets such as UDP or TCP packets are encapsulated in IP data packets to be carried from one host to another. IP is a connectionless, unreliable service, meaning there is no guarantee that the data will reach the intended host. The datagrams may be damaged upon arrival, out of order, or not arrive at all. Therefore the layers above IP, such as TCP, are responsible for making sure correct data is delivered. IP provides for:

- Addressing

- Type of service specification

- Fragmentation and re-assembly

- Security

IP packet format

0 4 8 16 31

+--------------------------------------------------------------------------------------+

| Version | Length | Type of Service | Total Length |

|------------------------------------------|-------------------------------------------|

| Identification | Flags | Fragmentation Offset |

|------------------------------------------|-------------------------------------------|

| Time to Live | Protocol | Header Checksum |

|--------------------------------------------------------------------------------------|

| Source Address |

|--------------------------------------------------------------------------------------|

| Destination Address |

|--------------------------------------------------------------------------------------|

| Options |

|--------------------------------------------------------------------------------------|

| Data |

+--------------------------------------------------------------------------------------+

- Version (4 bits): The IP protocol version, currently 4 or 6.

- Header length (4 bits): The number of 32 bit words in the header

- Type of service (TOS) (8 bits): Only 4 bits are used, which are minimize delay, maximize throughput, maximize reliability, and minimize monetary cost. Only one of these bits can be on. If all bits are off, the service is normal. Some networks also allow a set of precedences to control the priority of messages. The bits are as follows:

- Bits 0-2 - Precedence:

- 111 - Network Control

- 110 - Internetwork Control

- 101 - CRITIC/ECP

- 100 - Flash Override

- 011 - Flash

- 010 - Immediate

- 001 - Priority

- 000 - Routine

- Bit 3 - A value of 0 means normal delay. A value of 1 means low delay.

- Bit 4 - Sets throughput. A value of 0 means normal and a 1 means high throughput.

- Bit 5 - A value of 0 means normal reliability and a 1 means high reliability.

- Bit 6-7 are reserved for future use.

- Total length of the IP data message in bytes (16 bits)

- Identification (16 bits) - Uniquely identifies each datagram. This is used to re-assemble the datagram. Each fragment of the datagram contains this same unique number.

- Flags (3 bits): One bit is the more fragments bit

- Bit 0 - reserved.

- Bit 1 - The don't fragment bit. A value of 0 means the packet may be fragmented while a 1 means it cannot be fragmented. If this value is set and the packet needs further fragmentation, the packet is dropped and an ICMP error message is generated.

- Bit 2 - This value is set on all fragments except the last one since a value of 0 means this is the last fragment.

- Fragment offset (13 bits): The offset in 8 byte units of this fragment from the beginning of the original datagram.

- Time to live (TTL) (8 bits): Limits the number of routers the datagram can pass through. Usually set to 32 or 64. Every time the datagram passes through a router this value is decremented by one or more, and when it reaches zero the datagram is discarded. This keeps the datagram from circulating in a loop forever.

- Protocol (8 bits): Identifies which protocol is encapsulated in the next data area. This may be, for example, TCP(6), UDP(17), ICMP(1), IGMP(2), or OSPF(89). A list of these protocols and their associated numbers may be found in the /etc/protocols file on Unix or Linux systems.

- Header checksum (16 bits): Covers the IP header only, including the options but not the data.

- Source IP address (32 bits): The IP address of the card sending the data.

- Destination IP address (32 bits): The IP address of the network card the data is intended for.

- Options:

- Security and handling restrictions

- Record route - Each router records its IP address

- Time stamp - Each router records its IP address and time

- Loose source routing - Specifies a set of IP addresses the datagram must go through.

- Strict source routing - The datagram can go through only the IP addresses specified.

- Data: The encapsulated payload of the protocol named in the Protocol field, for example a TCP segment, a UDP datagram or an ICMP message.

The message order of bits transmitted is 0-7, then 8-15, in network byte order. Fragmentation is handled at the IP network layer and the messages are reassembled when they reach their final destination. If one fragment of a datagram is lost, the entire datagram must be retransmitted. This is why TCP tries to avoid fragmentation. The data on the last line of the diagram is the payload carried by the datagram, not part of the IP header.
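The fixed 20-byte part of the header described above can be unpacked with Python's `struct` module. The sketch below is an illustration, not a full parser: the function names are made up, options are ignored when parsing, and `SAMPLE` is a hand-made example header (a UDP datagram from 192.168.0.1 to 192.168.0.199), not captured traffic. The checksum function uses the standard ones' complement sum over 16-bit words.

```python
import ipaddress
import struct

# Hand-made example header: version 4, 5 words long, don't fragment set.
SAMPLE = bytes.fromhex("45000073000040004011b861c0a80001c0a800c7")


def parse_ipv4_header(raw):
    """Unpack the fixed 20 bytes of an IPv4 header into a dictionary."""
    (ver_len, tos, total_length, identification, flags_offset,
     ttl, protocol, checksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", raw[:20])
    flags = flags_offset >> 13
    return {
        "version": ver_len >> 4,
        "header_length": (ver_len & 0x0F) * 4,  # field counts 32 bit words
        "tos": tos,
        "total_length": total_length,
        "identification": identification,
        "dont_fragment": bool(flags & 0b010),
        "more_fragments": bool(flags & 0b001),
        "fragment_offset": (flags_offset & 0x1FFF) * 8,  # field counts 8 byte units
        "ttl": ttl,
        "protocol": protocol,
        "checksum": checksum,
        "source": str(ipaddress.IPv4Address(src)),
        "destination": str(ipaddress.IPv4Address(dst)),
    }


def header_checksum(header):
    """Ones' complement of the ones' complement sum of all 16 bit words."""
    total = 0
    for i in range(0, len(header), 2):
        total += (header[i] << 8) | header[i + 1]
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


fields = parse_ipv4_header(SAMPLE)
print(fields["source"], "->", fields["destination"], "protocol", fields["protocol"])
# Summing a header that already contains a correct checksum gives 0.
print(header_checksum(SAMPLE))
```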

Type of service specification

RFC 791 defined a field within the IP header called the Type Of Service (TOS) byte. This field is used to specify the quality of service desired for the datagram and is a mix of factors. These factors include fields such as Precedence, Speed, Throughput and Reliability. In normal conversations you would not use any such special alternatives, so the Type of Service byte typically would be set to zero. With the advent of multimedia transmission and emergence of protocols such as Session Initiation Protocol (SIP), this field is coming into use.

The IP Type of Service Byte:

0 1 2 3 4 5 6 7

+------------------------------------------------+

| Precedence | D | T | R | 0 | 0 |

+------------------------------------------------+

- Bits 0-2: Precedence.

- Bit 3: Delay (0 = Normal Delay, 1 = Low Delay)

- Bit 4: Throughput (0 = Normal Throughput, 1 = High Throughput)

- Bit 5: Reliability (0 = Normal Reliability, 1 = High Reliability)

- Bits 6-7: Reserved for Future Use.

The three bit Precedence field is defined as follows:

| Precedence bits | Definition |

|---|---|

| 111 | Network Control |

| 110 | Internetwork Control |

| 101 | CRITIC/ECP |

| 100 | Flash Override |

| 011 | Flash |

| 010 | Immediate |

| 001 | Priority |

| 000 | Routine |
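Decoding a Type of Service byte according to the layout above is a matter of shifting and masking. A minimal Python sketch; the names `PRECEDENCE` and `decode_tos` are invented for the example. Note that RFC 791 numbers bits from the most significant end, so bit 0 is the leftmost bit of the byte.

```python
PRECEDENCE = {
    0b111: "Network Control",
    0b110: "Internetwork Control",
    0b101: "CRITIC/ECP",
    0b100: "Flash Override",
    0b011: "Flash",
    0b010: "Immediate",
    0b001: "Priority",
    0b000: "Routine",
}


def decode_tos(tos):
    """Split a Type of Service byte into precedence and the D, T, R bits."""
    return {
        "precedence": PRECEDENCE[(tos >> 5) & 0b111],   # bits 0-2
        "low_delay": bool(tos & 0b00010000),            # bit 3 (D)
        "high_throughput": bool(tos & 0b00001000),      # bit 4 (T)
        "high_reliability": bool(tos & 0b00000100),     # bit 5 (R)
    }


print(decode_tos(0b00000000))  # the usual case: Routine, nothing requested
print(decode_tos(0b10110000))  # CRITIC/ECP with low delay
```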

Session Initiation Protocol (SIP)

This protocol is an application-layer control protocol used for creating, modifying and terminating sessions with one or more participants. Some examples of such activities include Internet multimedia conferences, Internet telephone calls and multimedia distribution. SIP is intended to support communications using Multicast, a mesh of Unicast relations, or a combination of both.

Fragmentation and reassembly

There are a number of differing network transmission architectures, each having a physical limit on the number of data bytes that may be contained within a given frame. This physical limit is described in numerous specifications and is referred to as the Maximum Transmission Unit (MTU) of the network. As a block of data is prepared for transmission, the sending or forwarding device examines the MTU for the network the data is to be sent or forwarded across. If the size of the block of data is less than the MTU for that network, the data is transmitted in accordance with the rules for that particular network.

There are two situations in which MTU becomes important:

- The size of the block of data being transmitted is greater than the MTU.

- Data must traverse across multiple network architectures, each with a different MTU.
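How a block of data larger than the MTU is split can be sketched in Python. This is a simplified illustration under assumptions: the function name `fragment` is made up, every fragment carries a 20-byte header without options, and only the sizes are computed, not actual packets. Because the fragment offset is counted in units of 8 bytes, every fragment except the last must carry a multiple of 8 data bytes.

```python
def fragment(payload_length, mtu, header_length=20):
    """Return a list of (offset in 8 byte units, data bytes, more fragments)."""
    # Largest amount of data per fragment, rounded down to a multiple of 8.
    max_data = (mtu - header_length) // 8 * 8
    if max_data <= 0:
        raise ValueError("MTU too small to carry any data")
    fragments = []
    offset = 0
    while offset < payload_length:
        size = min(max_data, payload_length - offset)
        more_fragments = offset + size < payload_length
        fragments.append((offset // 8, size, more_fragments))
        offset += size
    return fragments


# 4000 bytes of data over a network with an MTU of 1500 bytes.
for entry in fragment(4000, 1500):
    print(entry)
```

With these numbers each full fragment carries 1480 data bytes, so the offsets are 0, 185 and 370, and only the last fragment has the more fragments flag cleared.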

IPv4 Fragmentation Fields

The three fields concerned with IP Fragmentation are:

| Field Name | Function | Offset Location | Alias |
|---|---|---|---|
| Identification | 16-bit field containing a unique number used to identify the frame and any associated fragments for reassembly. | 18-19 | Identification Field |
| Flags | 3-bit field containing the flags that specify the function of the frame in terms of whether fragmentation has been employed, additional fragments are coming, or this is the final fragment. | 20 | Fragmentation Flags |
| Fragmentation Offset | 13-bit field indicating the position of a particular fragment's data in relation to the first byte of data (offset 0). | 20-21 | Fragment Offset |
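As a rough illustration, the three fields can be pulled out of a raw IPv4 header with Python's `struct` module. This sketch assumes the offsets in the table are counted from the start of an Ethernet frame (14-byte frame header), so inside the IP header itself the fields sit at bytes 4-7. The function name `parse_fragment_fields` is hypothetical. The 13-bit offset is counted in units of 8 bytes, per RFC 791.

```python
import struct


def parse_fragment_fields(ip_header: bytes) -> dict:
    """Extract Identification, Flags and Fragment Offset from an IPv4 header."""
    # Bytes 4-5 hold Identification; bytes 6-7 hold the 3 flag bits
    # followed by the 13-bit fragment offset.
    ident, flags_and_offset = struct.unpack_from("!HH", ip_header, 4)
    return {
        "identification": ident,
        "dont_fragment": bool(flags_and_offset & 0x4000),   # x1x
        "more_fragments": bool(flags_and_offset & 0x2000),  # xx1
        "offset_bytes": (flags_and_offset & 0x1FFF) * 8,    # 8-byte units
    }


if __name__ == "__main__":
    # A hand-built 20-byte header: ID 0x1234, More Fragments set, offset 185 units.
    header = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 1500, 0x1234,
                         0x2000 | 185, 64, 6, 0, bytes(4), bytes(4))
    print(parse_fragment_fields(header))
```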

Identification

With increasing interconnection and complexity of networks, fragments from multiple blocks of data might travel along different paths to the destination, possibly arriving out of sequence in relation to one another. That is, a fragment from block number one might arrive intermixed with the data stream for block number 2 or vice versa. The function of the Fragment Offset Field is to identify the relative position of each fragment, and it is the Identification Field that serves to allow the receiving device to sort out which fragments comprise what block of data. Each fragment from a particular data stream will have the same Identification Field, uniquely identifying which block it belongs to. If one or more fragments are lost, the buffer of the device performing the reassembly process will time out and discard all of the fragments. In the event of such a time out, the data will then have to be retransmitted by the sending device.

Flags

| Bit Indicator | Definition |
|---|---|
| 0xx | Reserved |
| x0x | May fragment |
| x1x | Do not fragment |
| xx0 | Last fragment |
| xx1 | More fragments |

When a receiving station processes each frame, one of the operations it performs is to review the Flags field. Depending on the value indicated by this field, several possible actions are then initiated, including:

- (xx1) More Fragments - Indicates that there are additional IP Fragments that comprise the data associated with that specific Identification Field. The receiving device will allocate buffer resources for reassembly and pass all frames containing that unique Identification Field to the buffer.

- (xx0) Last Fragment - Indicates that this fragment is the final frame for the data block identified by the Identification Field. The receiving device will now attempt to reassemble the fragments in the order specified by the Fragment Offset field.

Fragment Offset

Because the fragments that comprise a block of data might travel along different paths to the destination, they might arrive out of sequence. While the Identification Field serves to mark which IP fragments belong to which block of data, it is the Fragment Offset Field, sometimes referred to as the Fragmentation Offset Field, that tells the receiving device which order to reassemble them in.

During the IP Fragmentation Reassembly process, if a particular fragment is found to be missing, as indicated by the Fragmentation Offset count, the buffer will enter a wait state until either the missing piece(s) are received or a time out occurs. In the event of such a time out, the buffer simply discards the fragments.

IP Fragmentation

Regardless of what situation occurs that requires IP Fragmentation, the procedure followed by the device performing the fragmentation must be as follows:

- The device attempting to transmit the block of data will first examine the Flag field to see if the field is set to the value of (x0x or x1x). If the value is equal to (x1x) this indicates that the data may not be fragmented, forcing the transmitting device to discard that data. Depending on the specific configuration of the device, an Internet Control Message Protocol (ICMP) Destination Unreachable -> Fragmentation required and Do Not Fragment Bit Set message may be generated.

- Assuming the Flag field is set to (x0x), the device computes the number of fragments required by dividing the amount of data by the largest payload a single fragment can carry: the MTU minus the IP header length, rounded down to a multiple of 8 bytes. This will result in "X" number of frames, with all but the final frame filling the MTU for that network to within a few bytes.

- It will then create the required number of IP packets and copy the IP header into each of these packets so that each packet will have the same identifying information, including the Identification Field.

- The Flag field in the first packet, and all subsequent packets except the final packet, will be set to "More Fragments." The final packet's Flag field will instead be set to "Last Fragment."

- The Fragment Offset will be set for each packet to record the relative position of the data contained within that packet.

- The packets will then be transmitted according to the rules for that network architecture.
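The steps above can be sketched in Python roughly as follows. This is an illustration only, not a real IP stack: the `Fragment` class and `fragment` function are hypothetical names, the header is assumed to be 20 bytes (no options), and the payload of every fragment except the last is rounded down to a multiple of 8 bytes because the offset field counts 8-byte units.

```python
from dataclasses import dataclass

IP_HEADER_LEN = 20  # assumption: IPv4 header without options


@dataclass
class Fragment:
    ident: int            # copied into every fragment
    more_fragments: bool  # True = "More Fragments", False = "Last Fragment"
    offset: int           # position of the payload, in 8-byte units
    payload: bytes


def fragment(ident: int, payload: bytes, mtu: int,
             dont_fragment: bool = False) -> list:
    """Split payload into fragments that fit the given MTU."""
    if IP_HEADER_LEN + len(payload) <= mtu:
        return [Fragment(ident, False, 0, payload)]  # fits, nothing to do
    if dont_fragment:
        # A real device would discard the packet and may send an ICMP
        # "fragmentation required" message; here we only raise an error.
        raise ValueError("fragmentation needed but Do Not Fragment is set")
    chunk = (mtu - IP_HEADER_LEN) // 8 * 8
    if chunk <= 0:
        raise ValueError("MTU too small to carry any payload")
    fragments = []
    for start in range(0, len(payload), chunk):
        piece = payload[start:start + chunk]
        more = start + chunk < len(payload)
        fragments.append(Fragment(ident, more, start // 8, piece))
    return fragments


if __name__ == "__main__":
    for f in fragment(7, bytes(4000), mtu=1500):
        print(f.offset, f.more_fragments, len(f.payload))
```

With 4000 bytes of payload and an MTU of 1500, the sketch produces three fragments carrying 1480, 1480 and 1040 bytes.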

IP Fragment Reassembly

If a receiving device detects that IP Fragmentation has been employed, the procedure followed by the device performing the Reassembly must be as follows:

- The device receiving the data detects the Flag Field set to "More Fragments".

- It will then examine all incoming packets for the same Identification number contained in the packet.

- It will store all of these identified fragments in a buffer in the sequence specified by the Fragment Offset Field.

- Once the final fragment has arrived, as indicated by the Flag Field being set to "Last Fragment," the device will attempt to reassemble the data in offset order.

- If reassembly is successful, the packet is then sent to the ULP in accordance with the rules for that device.

- If reassembly is unsuccessful, perhaps due to one or more lost fragments, the device will eventually time out and all of the fragments will be discarded.

- The transmitting device will then have to attempt to retransmit the data in accordance with its own procedures.
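A minimal sketch of the reassembly procedure, assuming all fragments passed in already share one Identification value and that time-outs are handled elsewhere. The function name `reassemble` and the tuple layout are chosen for illustration. The function returns `None` whenever the last fragment has not arrived or a gap is found, which is the case where a real device would keep waiting until its timer expires.

```python
def reassemble(fragments):
    """Rebuild a data block from (offset_units, more_fragments, payload) tuples.

    Fragments may be given in any order. Returns the reassembled bytes, or
    None if the set is still incomplete.
    """
    frags = list(fragments)
    # Index payloads by their starting byte (offsets are in 8-byte units).
    by_start = {offset * 8: payload for offset, _more, payload in frags}
    # The fragment without "More Fragments" tells us the total length.
    ends = [offset * 8 + len(payload)
            for offset, more, payload in frags if not more]
    if not ends:
        return None  # last fragment not seen yet
    total = ends[0]
    data = bytearray()
    while len(data) < total:
        piece = by_start.get(len(data))
        if not piece:
            return None  # gap: a fragment is missing
        data += piece
    return bytes(data) if len(data) == total else None


if __name__ == "__main__":
    block = bytes(range(256)) * 10
    frags = [(185, False, block[1480:]), (0, True, block[:1480])]
    print(reassemble(frags) == block)
```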

Across networks

Imagine a block of data originating on a 16Mb Token Ring network (MTU = 17914B) that is connected to another 16Mb Token Ring network (MTU = 17914B) via an Ethernet network (MTU = 1500B). The data block met the MTU restriction for a 16Mb Token Ring network, but the router connecting the Token Ring to the Ethernet network is faced with having to forward this large block onto a network with a smaller MTU. It will simply follow the rules for IP Fragmentation as if it was transmitting the frame itself, except that the Identification Field will be that of the original frame.

The router on the other end of the Ethernet network does not reassemble the fragments, even though the second Token Ring network could carry the original block whole. In IPv4, fragments travel on as independent packets and are reassembled only at the final destination, exactly as previously described.
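As a worked example of the arithmetic, the sketch below counts the fragments the first router would produce. It assumes the original packet fills the Token Ring MTU exactly (17914 bytes including a 20-byte IP header without options). The function name `fragment_count` is made up for illustration.

```python
def fragment_count(packet_len: int, mtu: int, header_len: int = 20):
    """Return (number of fragments, payload bytes in the last fragment)."""
    payload = packet_len - header_len
    # Each fragment carries its own header; payload per fragment must be
    # a multiple of 8 bytes because offsets are counted in 8-byte units.
    per_fragment = (mtu - header_len) // 8 * 8
    count = -(-payload // per_fragment)  # ceiling division
    last = payload - (count - 1) * per_fragment
    return count, last


if __name__ == "__main__":
    print(fragment_count(17914, 1500))
```

Under these assumptions each Ethernet fragment carries 1480 bytes of payload, so the 17894 bytes of data need 13 fragments, the last one carrying 134 bytes.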

Security

IP is responsible for the transmission of packets between network end points. IP includes some features which provide basic measures of fault-tolerance (time to live, checksum), traffic prioritization (type of service) and support for the fragmentation of larger packets into multiple smaller packets (ID field, fragment offset). The support for fragmentation of larger packets provides a protocol allowing routers to fragment a packet into smaller packets when the original packet is too large for the supporting datalink frames. IP fragmentation exploits (attacks) use the fragmentation protocol within IP as an attack vector [5][6].

IPv4 fragmentation and reassembly can also be used to trigger a Denial of Service (DoS) attack. The receiving device will attempt reassembly following receipt of a frame containing a Flag field set to (xx1), indicating more fragments are to follow. Receipt of such a frame causes the receiving device to allocate buffer resources for reassembly. If a device is flooded with separate frames, each with the Flag field set to (xx1) but each with the Identification Field set to a different value, the device would attempt to allocate resources to each separate fragment in preparation for reassembly and would quickly exhaust its available resources while waiting for buffer time-outs to occur. To defend against such DoS attempts, many network security features include specific rules implemented at the firewall that change the time-out value for how long they will hold incoming fragments before discarding them.
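The idea of a configurable time-out can be sketched as a small bookkeeping table. This is an illustration, not how any particular firewall works: the class name `ReassemblyTable` is hypothetical, and the `max_entries` cap is an extra assumption on top of the time-out described above. The current time is passed in explicitly so the behaviour is easy to follow.

```python
class ReassemblyTable:
    """Tracks pending reassemblies, dropping stale ones after a time-out."""

    def __init__(self, timeout: float, max_entries: int):
        self.timeout = timeout          # seconds to hold incomplete fragments
        self.max_entries = max_entries  # assumed cap on pending reassemblies
        self.first_seen = {}            # Identification -> arrival time

    def expire(self, now: float) -> int:
        """Discard entries older than the time-out; return how many."""
        stale = [ident for ident, t in self.first_seen.items()
                 if now - t >= self.timeout]
        for ident in stale:
            del self.first_seen[ident]
        return len(stale)

    def accept(self, ident: int, now: float) -> bool:
        """Return True if a fragment with this Identification may be buffered."""
        self.expire(now)
        if ident in self.first_seen:
            return True
        if len(self.first_seen) >= self.max_entries:
            return False  # table full: refuse instead of exhausting memory
        self.first_seen[ident] = now
        return True


if __name__ == "__main__":
    table = ReassemblyTable(timeout=5.0, max_entries=3)
    print([table.accept(i, now=0.0) for i in (1, 2, 3, 4)])
    print(table.accept(4, now=5.0))
```

A shorter time-out frees buffers sooner during a flood, at the cost of giving slow but legitimate fragments less time to arrive.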

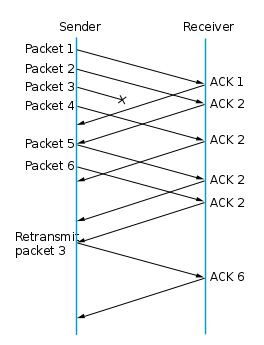

Transmission Control Protocol (TCP)

Transmission Control Protocol (TCP) operates at the transport layer and provides a reliable, connection-oriented service. Connection-oriented means the client and server must establish a connection before data is sent. TCP is defined by RFC 793 and RFC 1122. TCP provides:

- End-to-end reliability

- Flow control

- Congestion control

TCP relies on the IP service at the network layer to deliver data to the host. Since IP is not reliable with regard to message quality or delivery, TCP must make its own provisions to ensure that messages arrive intact, in order and without loss.

TCP segment format

    0                                  16                                31
    +---------------------------------------------------------------------+
    |           Source port            |         Destination port         |
    |---------------------------------------------------------------------|
    |                           Sequence number                           |
    |---------------------------------------------------------------------|
    |                       Acknowledgement number                        |
    |---------------------------------------------------------------------|
    | hlen | Reserved | URG | ACK | PSH | RST | SYN | FIN |  Window size  |
    |---------------------------------------------------------------------|
    |             Checksum             |          Urgent pointer          |
    |---------------------------------------------------------------------|
    |             Options              |             Padding              |
    |---------------------------------------------------------------------|
    |                                Data                                 |
    +---------------------------------------------------------------------+

- Source port number (16 bits)

- Destination port number (16 bits)

- Sequence number (32 bits): The position in the data stream of the first data byte carried in this segment.

- Acknowledgement number (32 bits): Contains the next sequence number that the sender of the acknowledgement expects to receive, which is the sequence number of the last byte successfully received plus 1. This number is used only if the ACK flag is on.

- Header length (4 bits): The length of the header in 32 bit words, required since the options field is variable in length.

- Reserved (6 bits)

- Flags:

  - URG (1 bit) - The urgent pointer is valid.

  - ACK (1 bit) - Makes the acknowledgement number valid.

  - PSH (1 bit) - Asks the receiver to pass the data to the application promptly rather than buffering it.

  - RST (1 bit) - Reset the connection.

  - SYN (1 bit) - Turned on when a connection is being established. The sequence number field then contains the initial sequence number chosen by this host for this connection.

  - FIN (1 bit) - The sender is done sending data.

- Window size (16 bits): The maximum number of bytes that the receiver is willing to accept.

- TCP checksum (16 bits): Calculated over the TCP header, data, and TCP pseudo header.

- Urgent pointer (16 bits): Only valid if the URG bit is set. The urgent mode is a way to transmit emergency data to the other side of the connection. It must be added to the sequence number field of the segment to generate the sequence number of the last byte of urgent data.

- Options (0 or more 32 bit words)

- Data (optional)
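The field list above maps directly onto a fixed 20-byte layout followed by options and data. A minimal parsing sketch with Python's `struct` module, where `parse_tcp_header` is a hypothetical name and the checksum is read but not verified:

```python
import struct

# Flag bits in the low 6 bits of the 16-bit header-length/flags word,
# listed from least significant bit upwards.
FLAG_NAMES = ["FIN", "SYN", "RST", "PSH", "ACK", "URG"]


def parse_tcp_header(segment: bytes) -> dict:
    """Decode the fixed part of a TCP header and split off options and data."""
    (src, dst, seq, ack, hlen_flags,
     window, checksum, urgent) = struct.unpack_from("!HHIIHHHH", segment, 0)
    header_len = (hlen_flags >> 12) * 4  # hlen counts 32-bit words
    flags = {name for bit, name in enumerate(FLAG_NAMES)
             if hlen_flags & (1 << bit)}
    return {
        "source_port": src,
        "destination_port": dst,
        "sequence_number": seq,
        "acknowledgement_number": ack,
        "header_length": header_len,
        "flags": flags,
        "window_size": window,
        "checksum": checksum,
        "urgent_pointer": urgent,
        "options": segment[20:header_len],
        "data": segment[header_len:],
    }


if __name__ == "__main__":
    # A hand-built SYN segment: hlen = 5 words, SYN flag set, 2 data bytes.
    syn = struct.pack("!HHIIHHHH", 12345, 80, 1000, 0,
                      (5 << 12) | 0x02, 65535, 0, 0) + b"hi"
    print(parse_tcp_header(syn))
```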

End-to-end reliability

In order for two hosts to communicate using TCP they must first establish a connection by exchanging messages in what is known as the three-way handshake:

    Host A                                             Host B
                          In the network
    Send SYN SEQ=x     | --------------------> |  Receive SYN
                       |                       |
    Receive SYN + ACK  | <-------------------- |  Send SYN SEQ=y, ACK x+1
                       |                       |
    Send ACK y+1       | --------------------> |  Receive ACK

- Host A initiates the connection by sending a TCP segment with the SYN control bit set and an initial sequence number (ISN) we represent as the variable x in the sequence number field.

- Host B receives this SYN segment at some point in time, processes it and responds with a TCP segment of its own. The response from Host B contains the SYN control bit set and its own ISN represented as variable y. Host B also sets the ACK control bit to indicate the next expected byte from Host A should contain data starting with sequence number x+1.

- When Host A receives Host B's ISN and ACK, it finishes the connection establishment phase by sending a final acknowledgement segment to Host B. In this case, Host A sets the ACK control bit and indicates the next expected byte from Host B by placing acknowledgement number y+1 in the acknowledgement field.

Once ISNs have been exchanged, communicating applications can transmit data between each other.
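The sequence and acknowledgement numbers in the handshake can be traced with a few lines of Python. This only models the numbers, not real sockets. `three_way_handshake` is a hypothetical helper, and the values are taken modulo 2^32 because the fields are 32 bits wide. The final ACK carries sequence number x+1 because the SYN itself consumes one sequence number.

```python
SEQ_MODULO = 2 ** 32  # sequence numbers wrap around at 32 bits


def three_way_handshake(x: int, y: int) -> list:
    """Return the three segments as (sender, flags, seq, ack) tuples.

    x and y are the initial sequence numbers chosen by Host A and Host B.
    """
    syn = ("A", {"SYN"}, x, None)                         # ack field unused
    syn_ack = ("B", {"SYN", "ACK"}, y, (x + 1) % SEQ_MODULO)
    ack = ("A", {"ACK"}, (x + 1) % SEQ_MODULO, (y + 1) % SEQ_MODULO)
    return [syn, syn_ack, ack]


if __name__ == "__main__":
    for sender, flags, seq, ack in three_way_handshake(x=100, y=300):
        print(sender, sorted(flags), seq, ack)
```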

Four segments are required to completely close a connection. This is because TCP is a full-duplex protocol, meaning that each end must shut down independently:

Host A Host B

In the network

Send FIN SEQ=x | --------------------> | Receive FIN

| |